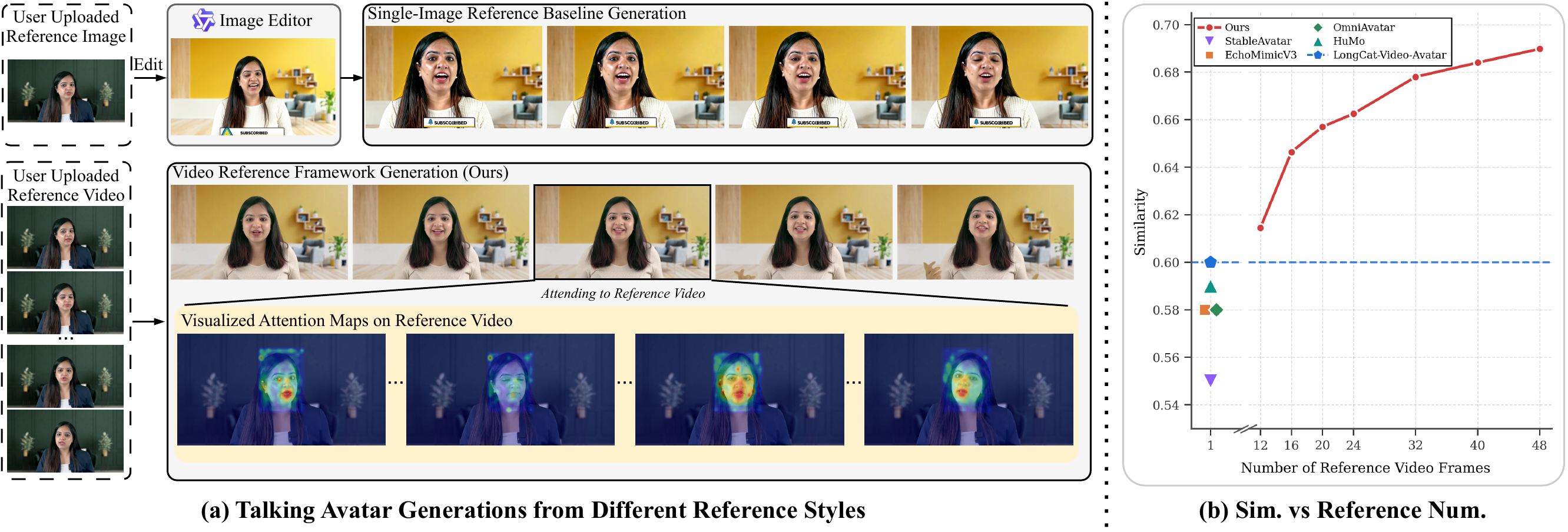

Talking avatar generation from video reference. (a) Visual comparisons demonstrate that our video reference framework yields significantly better identity preservation compared to the single-image baseline in cross-scene generation. The heatmaps reveal that our method selectively aggregates salient identity cues (e.g., lip shapes and facial silhouettes) from highly correlated frames, while naturally suppressing frames with mismatched poses and expressions. (b) The plot shows that our identity similarity increases with the number of reference frames, confirming that longer reference input is advantageous.